The Material Cost of AI

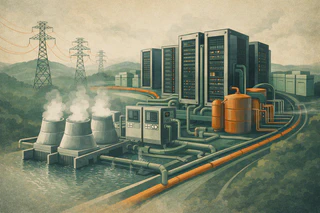

AI is frequently presented as if it were an abstract layer of intelligence floating above the physical world. That framing is convenient, but false. Training and serving large-scale models depends on data centres, power generation, transmission networks, water-intensive cooling systems, semiconductor supply chains, and land use decisions. Every claim that AI is becoming faster, bigger, or more widely deployed implies a set of material demands somewhere else. To understand that cost honestly, it helps to combine global energy projections, national infrastructure estimates, and research on water use rather than relying on a single headline number.

The scale is already substantial. In its 2025 report Energy and AI, the International Energy Agency estimated that data centres consumed about 415 terawatt-hours (TWh) of electricity in 2024, equivalent to roughly 1.5% of global electricity demand. The same report notes that data-centre electricity consumption grew at about 12% per year over the previous five years. Under the IEA’s base-case projection, global data-centre demand rises to roughly 945 TWh by 2030. In the United States, the report estimates data-centre electricity use at about 540 kWh per person in 2024, climbing to more than 1,200 kWh per person by 2030.
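To compare the projection with the historical 12% figure, it can help to convert the start and end values into an implied annual growth rate. The sketch below is a back-of-the-envelope calculation using only the numbers quoted above. The function name, the choice of 2024 as the base year, and the assumption of constant compounding are illustrative and do not come from the IEA report.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that takes `start` to `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text from the IEA's Energy and AI report.
GLOBAL_TWH_2024 = 415
GLOBAL_TWH_2030 = 945
US_KWH_PER_PERSON_2024 = 540
US_KWH_PER_PERSON_2030 = 1200  # the report says "more than", so this is a floor

# Assumption: growth compounds evenly across the six years from 2024 to 2030.
years = 2030 - 2024

global_rate = implied_annual_growth(GLOBAL_TWH_2024, GLOBAL_TWH_2030, years)
us_rate = implied_annual_growth(US_KWH_PER_PERSON_2024, US_KWH_PER_PERSON_2030, years)

print(f"Global data-centre demand: {global_rate:.1%} per year")
print(f"U.S. per-person use: at least {us_rate:.1%} per year")
```

Both rates come out somewhat above the 12% historical pace, which suggests the base case assumes growth that continues and modestly accelerates rather than levelling off. Real demand will not follow a smooth curve, so the result is best read as an average.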

National evidence suggests this is not an abstract global average but an immediate infrastructure issue. Lawrence Berkeley National Laboratory reported in January 2025 that U.S. data centres consumed about 4.4% of total U.S. electricity in 2023, and that this share could rise to between 6.7% and 12% by 2028. The same report estimates that U.S. data-centre electricity use rose from 58 TWh in 2014 to 176 TWh in 2023 and could reach 325 to 580 TWh by 2028. That is roughly a tripling over nine years, with a further increase of about 1.8 to 3.3 times possible within the following five, and the lab explicitly links much of the recent acceleration to AI servers and their cooling demands.
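The percentage shares and the TWh figures can be cross-checked against each other. The sketch below divides each data-centre figure by its quoted share to recover the total U.S. electricity consumption that the pairing implies. These totals are derived here from rounded numbers and are not figures stated in the Berkeley Lab report; the function and variable names are hypothetical.

```python
def implied_total_twh(data_centre_twh: float, share: float) -> float:
    """Total electricity use implied by a data-centre figure and its share of the total."""
    return data_centre_twh / share


# Figures quoted in the text from the Berkeley Lab report.
DC_TWH_2023 = 176
DC_TWH_2028_LOW, DC_TWH_2028_HIGH = 325, 580
SHARE_2023 = 0.044
SHARE_2028_LOW, SHARE_2028_HIGH = 0.067, 0.12

# Derived values (assumption: each share pairs with the matching TWh figure).
total_2023 = implied_total_twh(DC_TWH_2023, SHARE_2023)
total_2028_low = implied_total_twh(DC_TWH_2028_LOW, SHARE_2028_LOW)
total_2028_high = implied_total_twh(DC_TWH_2028_HIGH, SHARE_2028_HIGH)

# How many times larger the 2028 scenarios are than 2023 data-centre use.
multiple_low = DC_TWH_2028_LOW / DC_TWH_2023
multiple_high = DC_TWH_2028_HIGH / DC_TWH_2023

print(f"Implied U.S. total, 2023: {total_2023:,.0f} TWh")
print(f"Implied U.S. total, 2028: {total_2028_low:,.0f} / {total_2028_high:,.0f} TWh")
print(f"2028 data-centre use vs 2023: {multiple_low:.2f}x to {multiple_high:.2f}x")
```

The two 2028 scenarios imply nearly the same national total, so the spread between 6.7% and 12% appears to reflect uncertainty about data-centre growth itself rather than about overall U.S. demand.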

Electricity is only part of the story. Water demand is another material constraint that is often hidden from public debate. The paper Making AI Less “Thirsty” estimated that training GPT-3 in Microsoft’s U.S. data centres could directly evaporate about 700,000 litres of clean freshwater, and argued that a 500 mL bottle of water can correspond to roughly 10 to 50 medium-length GPT-3 responses depending on time and location. The broader point is not that one prompt has a single fixed water cost. It is that water use varies by local climate, cooling design, and power generation mix, meaning the same AI workload can impose very different burdens in different places.
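The bottle comparison can be turned into a per-response range with simple division. The sketch below uses only the figures quoted above; the variable names are illustrative, and the training and per-response estimates measure different things, so they should not be added together.

```python
# Figures quoted in the text from "Making AI Less 'Thirsty'".
BOTTLE_ML = 500
RESPONSES_PER_BOTTLE_MIN = 10   # fewest responses per bottle (most water each)
RESPONSES_PER_BOTTLE_MAX = 50   # most responses per bottle (least water each)
TRAINING_LITRES = 700_000       # direct evaporation estimate for GPT-3 training

# Per-response range implied by the bottle comparison.
ml_per_response_high = BOTTLE_ML / RESPONSES_PER_BOTTLE_MIN
ml_per_response_low = BOTTLE_ML / RESPONSES_PER_BOTTLE_MAX

# The training estimate expressed in the same 500 mL bottles.
training_bottles = TRAINING_LITRES * 1000 // BOTTLE_ML

print(f"Water per response: {ml_per_response_low:.0f} to {ml_per_response_high:.0f} mL")
print(f"Training estimate: {training_bottles:,} bottles of {BOTTLE_ML} mL")
```

The fivefold spread between the low and high per-response values is the substantive result: it reflects the dependence on time and location that the paper stresses, not measurement noise.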

That caution is important because the environmental literature is still evolving. A 2021 review in npj Clean Water emphasizes that data-centre water consumption remains under-measured and hard to compare because reporting standards are inconsistent and key operational data is often unavailable. In other words, the environmental cost may be significant, but the public still lacks the transparency needed to evaluate it cleanly. That lack of transparency is itself part of the governance problem.

These numbers matter because infrastructure burdens are never evenly distributed. When electricity demand rises quickly, utilities need new capacity, transmission upgrades, or load-management strategies. That can affect prices, grid reliability, and which regions are prioritized for industrial expansion. Communities living near major facilities may bear noise, water, and land-use tradeoffs, while the economic upside flows elsewhere. The political language of “AI leadership” can obscure who is expected to absorb those costs.

There is also a governance issue. When firms announce ever larger models and always-on AI features, the public rarely sees a clear accounting of the corresponding infrastructure demand. Product launches are framed as breakthroughs in capability, not as decisions that commit energy systems and local resources. That makes democratic scrutiny harder. People are invited to debate whether the outputs are useful, but not whether the underlying build-out is justified, equitable, or sustainable.

None of this means AI development should stop. It means the conversation has to mature. If an application creates real public value, then its energy and infrastructure requirements should be assessed openly, with the same seriousness applied to transport, housing, or heavy industry. Efficiency improvements are important, but they do not eliminate the need for accountability about absolute demand.

The broader concern is that “digital” has become a rhetorical way of hiding material extraction. AI is not magic. It is computation running on physical systems that draw power from grids and resources from real places. The IEA shows the global trajectory, Berkeley Lab shows how fast local grid pressure is already changing in the United States, and water-footprint research shows that environmental impacts cannot be reduced to electricity alone. Any honest evaluation of AI progress has to include those physical costs, because they are part of the technology, not external to it.

Sources

- International Energy Agency. Energy and AI, 2025. https://www.iea.org/reports/energy-and-ai/energy-demand-from-ai

- Lawrence Berkeley National Laboratory. “Berkeley Lab Report Evaluates Increase in Electricity Demand from Data Centers.” 15 January 2025. https://newscenter.lbl.gov/2025/01/15/berkeley-lab-report-evaluates-increase-in-electricity-demand-from-data-centers/

- Pengfei Li, Jianyi Yang, Mohammad A. Islam, and Shaolei Ren. “Making AI Less ‘Thirsty’: Uncovering and Addressing the Secret Water Footprint of AI Models.” arXiv:2304.03271, 2023. https://arxiv.org/abs/2304.03271

- David Mytton. “Data centre water consumption.” npj Clean Water 4, 11 (2021). https://www.nature.com/articles/s41545-021-00101-w